Create Association Rules for Market Basket Analysis for a Given Threshold Using R and the Groceries (Retail) Dataset

Link to the program and Datasets is given below

Market Basket Analysis helps companies understand how their customers make purchases. The objective is to inform the design of sales promotions, loyalty programs, and store layout.

What is Market Basket Analysis?

Market basket analysis studies the concept of affinity. Affinity is the natural liking or understanding of something. It can also mean the degree to which something tends to combine with another. To perform market basket analysis, we need a data set of transactions. Each transaction consists of a group of products that were bought together. Let’s say that I visited a supermarket and bought yogurt, milk, pens, cheese, and paper. These products were bought in a single transaction. The transactions are then gathered and analyzed to identify rules of association.

So how can we determine the strength of an association? To answer this question, we need to consider three metrics:

- Support: This metric is indicative of how frequently the products appear in the data set. For example, if we have ten transactions, and pens appear in seven transactions, then the support is 7/10, which is 70%. High support percentages are preferable, as they indicate that the association is likely to apply to a large number of future transactions.

Support (X -> Y) = Support (X ∪ Y)

- Confidence: This metric is the probability that a transaction containing a certain product (X) will also contain product (Y), written {X -> Y}. So the confidence of X -> Y = Pr(X&Y) / Pr(X). The issue with this metric is that confidence can be high simply because Y is a popular product that appears in many transactions, whether or not X is present. So we need a way to control for the popularity of Y.

Confidence (X -> Y) = Support (X -> Y) / Support (X)

- Lift: This metric measures how much more likely Y is to be purchased when X is purchased, while controlling for the popularity of Y. To control for the popularity of Y, we take the probability of all the products in a rule occurring together and divide it by the product of their individual probabilities, that is, the probability we would expect if there were no association between them. For example, if milk and cheese occurred together in 2% of all transactions, milk in 15% of transactions and cheese in 5% of transactions, then the lift is: 0.02 / (0.15 * 0.05) ≈ 2.67. A lift value equal to one indicates that products X and Y are independent of each other. We should look for lift values greater than one, because they mean that item Y is more likely to be bought when item X is bought than its overall popularity would suggest. The larger the lift, the stronger the link between the two products.

Lift (X -> Y) = Support (X -> Y) / (Support (X) * Support (Y))
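
The three metrics can be checked by hand on a tiny example. The sketch below uses base R only; the `baskets` list and the helper names `support`, `confidence`, and `lift` are made up for illustration and are not part of any package.

```r
# Hypothetical toy baskets, invented purely for illustration.
baskets <- list(
  c("milk", "cheese", "pens"),
  c("milk", "yogurt"),
  c("cheese", "paper"),
  c("milk", "cheese"),
  c("pens", "paper")
)

# Support: share of baskets that contain every item in `items`.
support <- function(items, baskets) {
  mean(vapply(baskets, function(b) all(items %in% b), logical(1)))
}

# Confidence (X -> Y) = Support (X and Y together) / Support (X)
confidence <- function(x, y, baskets) {
  support(c(x, y), baskets) / support(x, baskets)
}

# Lift (X -> Y) = Support (X and Y together) / (Support (X) * Support (Y))
lift <- function(x, y, baskets) {
  support(c(x, y), baskets) / (support(x, baskets) * support(y, baskets))
}

print(support(c("milk", "cheese"), baskets))   # 2 of 5 baskets
print(confidence("milk", "cheese", baskets))   # 0.4 / 0.6
print(lift("milk", "cheese", baskets))         # 0.4 / (0.6 * 0.6)

# The milk-and-cheese numbers quoted in the text:
print(0.02 / (0.15 * 0.05))
```

Note the parentheses in the lift denominator: without them the division would be applied before the multiplication and give a different number.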

Market Basket analysis aims to give answers to the following:

- What are the Purchase Patterns? (Items purchased together/sequentially/seasonally)

- Which products might benefit from advertising?

- Why do customers buy certain products?

- What time of the day do they buy it?

- Who are the customers? (Students, families etc)

Working of Apriori Algorithm

Finding frequent item-sets is one of the most investigated fields of data mining. The Apriori algorithm is the most established algorithm for Frequent Item-sets Mining (FIM).

Example:-

Definition of Frequent Item-sets

A set of items that appears in many baskets is said to be “frequent.” To be formal, we assume there is a number s, called the support threshold. If I is a set of items, the support for I is the number of baskets for which I is a subset. We say I is frequent if its support is s or more.

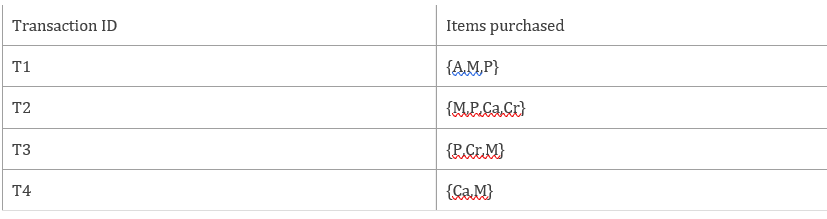

Let’s consider a simple example. Consider the transactions for the following items:

Next, consider the rule that an item or itemset is frequently purchased if it occurs in at least 50% of the transactions. Here that means it must be bought at least 2 times.

For simplicity, let’s abbreviate the items as follows:

Apple-A

Mango-M

Pears-P

Cabbage-Ca

Carrots-Cr

So the table now becomes

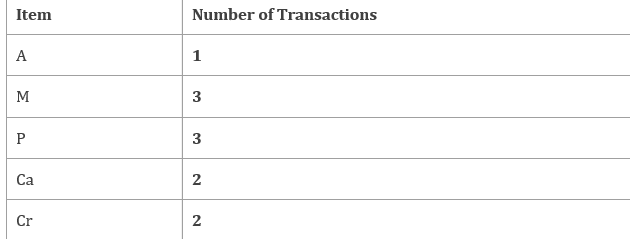

Step 1: Count the number of transactions in which each item occurs

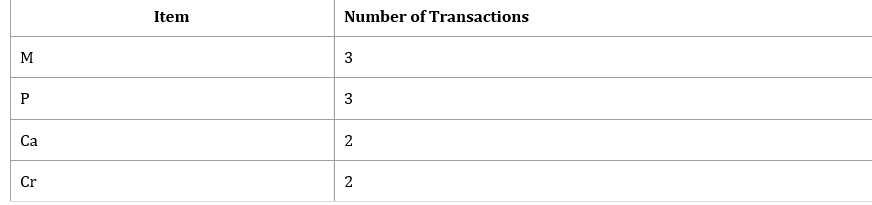

Step 2: Now remove all the items that are purchased fewer than 2 times. So the new table becomes

Step 3: Start making pairs of the items from step 2 with each other

Note: Itemsets PM, CaP, and CrP are the same as MP, PCa, and PCr, so they are not listed again in Step 3.

Step 4: Now we count how many times each pair shown in Step 3 occurs in Table 1.

Step 5: Recall the threshold: an itemset counts as frequent only if it is purchased at least 2 times, that is, in 50% of the transactions.

Applying this rule to the counts from Step 4 reduces the table to the following:

So this table shows that the itemsets MP (Mango and Pears), MCa (Mango and Cabbage) and MCr (Mango and Carrots) are purchased together in at least 50% of the transactions.
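
The five steps above can be sketched in base R. The transactions below are hypothetical: they are not the table from the original figure, only a made-up set of four baskets chosen so that the same three pairs come out as frequent. The variable names are illustrative as well.

```r
# Hypothetical transactions (NOT the original table), using the same abbreviations.
transactions <- list(
  c("A", "M", "P"),
  c("M", "P", "Ca"),
  c("M", "Ca", "Cr"),
  c("M", "Cr")
)
min_count <- 2  # 50% of 4 transactions

# Step 1: count the number of transactions in which each item occurs.
item_counts <- table(unlist(lapply(transactions, unique)))

# Step 2: keep only the items that meet the threshold.
frequent_items <- sort(names(item_counts)[as.vector(item_counts) >= min_count])

# Step 3: build each unordered pair once (so MP and PM are the same candidate).
pairs <- combn(frequent_items, 2, simplify = FALSE)

# Step 4: count the transactions that contain both items of each pair.
pair_counts <- vapply(pairs, function(p) {
  sum(vapply(transactions, function(t) all(p %in% t), logical(1)))
}, integer(1))
names(pair_counts) <- vapply(pairs, paste, character(1), collapse = "+")

# Step 5: keep only the pairs that meet the threshold.
frequent_pairs <- pair_counts[pair_counts >= min_count]
print(frequent_pairs)
```

The full Apriori algorithm repeats Steps 3 to 5 for triples, then for larger itemsets, building candidates only from itemsets that were frequent at the previous level.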

Program for implementing Market Basket Analysis using Groceries Dataset:

Step 1: Load the necessary packages.

First, we will load the packages required for our program.

- arules – Provides the infrastructure for representing, manipulating and analyzing transaction data and patterns (frequent itemsets and association rules). The Groceries data set ships with this package.

- arulesViz – Implements several known and novel visualization techniques to explore association rules.

- datasets – This package contains a variety of datasets.
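
A minimal sketch of this step, assuming the packages are already installed (for example with `install.packages(c("arules", "arulesViz"))`). R package names are case-sensitive, so they must be written exactly as below.

```r
# Load the packages used in the rest of the walkthrough.
library(arules)     # transactions, apriori(), inspect()
library(arulesViz)  # plot methods for association rules
library(datasets)   # base R sample data sets (attached by default)
```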

Step 2: Read the Groceries Dataset
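
The original listing is not reproduced here. One way to do this step, assuming the copy of Groceries bundled with arules is used rather than a separate file, might be:

```r
library(arules)

# Load the Groceries transactions that ship with the arules package.
data("Groceries")

# Quick look at the size and the most frequent items.
summary(Groceries)

# Show the first three baskets.
inspect(head(Groceries, 3))
```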

Step 3: Now, let’s take a look at the top 10 items in our dataset

Step 4: Next, generate the rules with the corresponding support and confidence using the Apriori algorithm in the arules package
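
A short sketch of this step, assuming the bundled Groceries data. The variable name `top10` is illustrative.

```r
library(arules)
data("Groceries")

# Absolute purchase counts per item, sorted from most to least frequent.
top10 <- head(sort(itemFrequency(Groceries, type = "absolute"), decreasing = TRUE), 10)
print(top10)

# The same information as a bar chart.
itemFrequencyPlot(Groceries, topN = 10, type = "absolute")
```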

Input:-
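
The original input is not reproduced here. A minimal sketch follows; the threshold values (support 0.001, confidence 0.8) are assumptions chosen for illustration, since the text does not state which values were used.

```r
library(arules)
data("Groceries")

# Assumed thresholds: replace them with the values given for your task.
rules <- apriori(
  Groceries,
  parameter = list(support = 0.001, confidence = 0.8, minlen = 2)
)

# Overview of the rule set, then the five rules with the highest lift.
summary(rules)
inspect(head(sort(rules, by = "lift"), 5))
```

Lowering the support threshold produces more rules, and raising the confidence threshold keeps only the more reliable ones.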

Output:-

Step 5: Now remove the redundant rules from the rule set

Input:-
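
The original input is not reproduced here, and it may have used a different pruning approach. The sketch below uses `is.redundant()` from arules, with the same assumed thresholds as an illustration.

```r
library(arules)
data("Groceries")

# Assumed thresholds, for illustration only.
rules <- apriori(
  Groceries,
  parameter = list(support = 0.001, confidence = 0.8, minlen = 2)
)

# Flag rules for which a more general rule performs at least as well,
# then keep only the non-redundant ones.
redundant <- is.redundant(rules)
rules_pruned <- rules[!redundant]

cat("Rules before pruning:", length(rules), "\n")
cat("Rules after pruning: ", length(rules_pruned), "\n")
```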

Output:-

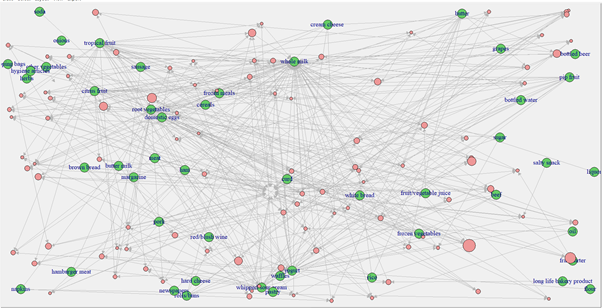

Step 6: Finally, display the graph for the market basket analysis / association rules.
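
A possible way to draw the graph with arulesViz is sketched below. The thresholds and the choice to plot only the ten rules with the highest lift are assumptions made to keep the picture readable, not something stated in the text.

```r
library(arules)
library(arulesViz)
data("Groceries")

# Assumed thresholds, for illustration only.
rules <- apriori(
  Groceries,
  parameter = list(support = 0.001, confidence = 0.8, minlen = 2)
)
rules_pruned <- rules[!is.redundant(rules)]

# Plot only the ten strongest rules by lift so the graph stays legible.
top_rules <- head(sort(rules_pruned, by = "lift"), 10)
plot(top_rules, method = "graph")
```

In the resulting graph, items and rules are drawn as nodes and the arrows show which items appear on the left-hand and right-hand side of each rule.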

Click here to download the Program and Datasets…

About Us

Thinksprout Infotech is a leading IT solutions company providing excellent services with great effort. The company also deals in online application and custom software development. Moreover, we have an extensively experienced team in programming databases and back-end solutions. In the same vein, we develop user-friendly applications for our clients for better operations and outputs.
